Not registered? - Request an account here

RASM2018 ICFHR2018 Competition on Recognition of Historical Arabic Scientific Manuscripts

Download

All ground truth resources created for RASM competitions are freely available under an open license for anyone wishing to advance the state-of-the-art in text recognition technology. Download here:

Overview

The British Library’s collection of Arabic manuscripts is internationally recognised as one of the largest and finest in Europe and North America, comprising almost 15,000 works in some 14,000 volumes. Since 2012, the Library, in partnership with The Qatar Foundation and Qatar National Library, has digitised and made freely available over 950,000 images and counting, featuring the cultural and historical heritage of the Gulf and wider region, on Qatar Digital Library (QDL).

Ranging from the early eighth century CE to the nineteenth century, the manuscripts are drawn from both Arab countries and other countries with Arab or Muslim communities including India, China, Indonesia, Malaysia, and West Africa, and they display fascinating variations in style and script.

As part of this project we would like to pose a challenge focussing on finding an optimal solution for accurately and automatically transcribing our vast and growing digital archive of historical Arabic scientific handwritten manuscripts within the QDL. Our aim is to improve accessibility of this rich content by enabling full-text search and discovery, as well as enabling large-scale text analysis.

Context

Cultural heritage institutions around the world are digitising vast archives containing hundreds of thousands of pages of historical manuscript collections in Arabic script and the ability to recognise such offline Arabic handwriting has the potential to truly transform research.

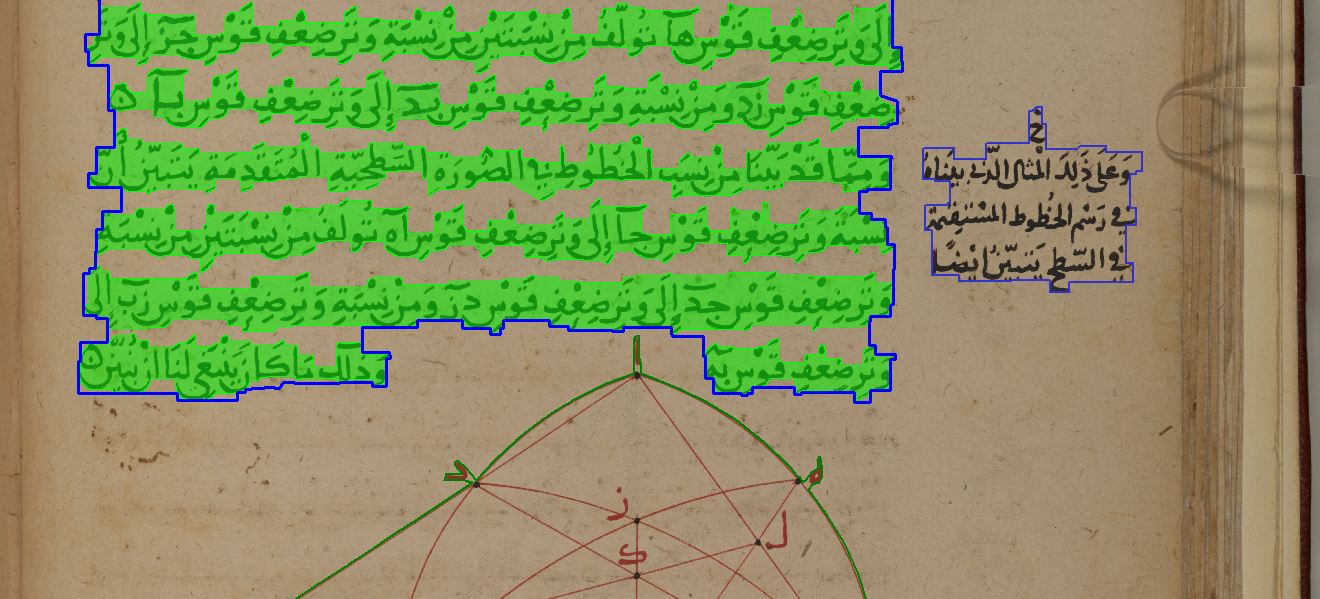

Arabic script, however, presents unique challenges for text line segmentation and baseline detection, essential pre-processing steps of optical character recognition. Arabic script writing styles are varied, characters are written in cursive, joined right to left, do not comprise capital letters, may take 2 to 4 shapes, and each is context sensitive. The shape of each of the 28 Arabic characters for instance may change drastically depending on their location in the word while the existence of non-joining characters means that although the script is cursive, they do not join to the following letter resulting in a small space within a word. Long strokes along the baseline, the complex combination of ascenders, descenders, diacritics, and special notation either above or below the baseline depending on the character pose further challenges.

Dataset

Please email us at rasm2018@primaresearch.org to get access to the competition datasets (example set with ground truth and evaluation set images).

The dataset to be used in this competition will be newly constructed and will exemplify the typical challenges in layout analysis and text recognition outlined above and found in historical Arabic manuscripts. We have chosen to focus on the Arabic scientific manuscripts on Qatar Digital Library in particular as they exhibit marginalia and diagrams, two common characteristics across the digital archive requiring a recognition solution.

Participants will be privided with:

- An example dataset of 10-15 original TIFF images and associated ground truth in PAGE format.

- A further 50-80 original TIFF images as part of the evaluation set. The ground truth for the evaluation set will be published after the competition.

Following the run of the competition the dataset created (images and ground truth) will be made freely available via the British Library’s data portal, TC10/TC11 Online Resources, as well as part of the IMPACT Centre of Competence Image and Ground Truth data repository to support and encourage further research in this ground-breaking area.

Submission protocol and evaluation methodology

Participating systems will be evaluated in different stages (main text block detection / segmentation, textline detection, text recognition) according to how far their methods are applicable within the analysis and recognition workflow – not all participating systems have to be end-to-end applications.

Participants will be expected to submit a short description of method (250 words), segmentation/recognition results in PAGE format and an executable.

Participants will be provided with a number of tools developed by PRImA that can be used in order to prepare and optimise their method(s) for submission (as well as to examine the example set in detail). They will also be supported in implementing the required output format by means of a PAGE exporter class and additional information about the underlying XML Schema.

The competition will use the comprehensive evaluation approach successfully employed in recent ICDAR competitions. It has been recently extended to perform text-based evaluation (e.g. for OCR) as well. As a whole, it takes into account a wide range of situations and provides considerable details on the performance of different methods. Each type of error is weighted according to the type of regions involved and the situation they are found. The evaluation tools used are freely available from the PRImA website.

In addition to the accuracy of their results, the submitted systems will also be evaluated on the scalability of their proposed solution to be implemented across the entire collection (as described earlier).

Participants will be encouraged to present their algorithms (if not already done) in a conference paper at ICFHR2018. The winning entry will also be invited to write a short article for the British Library website describing their work.

Participants will be encouraged to share their algorithms and codes using one of the open-source licenses.

Additional information

The ICFHR2018 Recognition of Historical Arabic Handwritten Manuscripts Competition (RASM2018) follows the format of previous successful competitions (including ICDAR Layout Analysis and Recognition competitions 2001, 2003, 2005, 2007, 2009, 2011, 2013, 2015, and 2017). The proposed competition will build upon the challenges of the previous competitions, with a new unique dataset and an end-to-end workflow scenario.

Participants will be provided with a number of tools developed by PRImA that can be used in order to prepare and optimise their method(s) for submission (as well as to examine the example set in detail). They will also be supported in implementing the required output format by means of PAGE exporter modules (C++ or Java) and additional information about the underlying XML Schema.